Talking Machines, Streaming Data: The World of IoT Analytics

3AI November 13, 2020

Digital business, which brings an unprecedented convergence of people, business, and things, is already upon us. On the analytics side, Descriptive & Diagnostic Analytics are entering the mainstream: most companies are taking the plunge into Data Discovery, Data Mining, Business Intelligence, and Data Visualization & Reporting as the first step toward becoming fully data-driven and realizing the full potential of digital business. The next phase, Predictive Analytics, has lower adoption so far, but three-fourths of the relevant businesses are actively pursuing it as a viable option in the next stage of their digital evolution. Forward-thinking businesses, however, are looking further ahead: to Prescriptive Analytics combined with the digital evolution toward connected things, and to the rise of self-learning algorithms. Together these will pave the way for the digital business of the future: autonomous systems and self-learning, evolving technology. This evolution will bring a very distinct change. The primacy of historical data will be overtaken by real-time data generated by a large array of interconnected things.

As the life cycle of trends keeps getting shorter, predictive forecasting based on historical data will steadily lose its luster. The new normal will be to quickly understand the trend of the moment, analyze it in real time, gather insights, and convert them into targeted prescriptive measures, all in an automated fashion. The staple technology for autonomous systems will be the Internet of Things (IoT): connected things will form the infrastructure and will also act as the customers, since they work, interact, negotiate, and decide with zero human intervention. However, IoT data loses its value if it is not detected and acted upon immediately. That is where streaming analytics platforms come into the picture. Just as the database management opportunity gave birth to a wide range of database technologies, and Big Data needed Hadoop, real-time enterprise and IoT applications need development tools and processing capability to support real-time streaming analytics.

Real-time streaming analytics enables the collection, integration, analysis, and visualization of data in real time without disrupting existing sources, storage, and enterprise systems.

Real-time streaming analytics differs fundamentally from the traditional approach of batch processing historical data, an approach that Big Data platforms also followed. In traditional analytics, analytic queries are run on batches of historical data. In Hadoop-based batch processing, for example, there is a definite time lag between the collection and storage of data sets, their analysis, and the final reporting. In real-time streaming analytics, the query is stored and kept running, and every time the data changes, the result is updated to reflect the changed data set. This makes it possible to process high volumes of data with very little delay.
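To make the contrast concrete, here is a minimal Python sketch that is not tied to any particular streaming platform. A batch query recomputes an average over a stored data set, while a standing query keeps running state and emits an updated answer as each reading arrives. The class and function names are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List


def batch_average(history: List[float]) -> float:
    """Batch style: the query runs once over a stored, historical data set."""
    return sum(history) / len(history)


@dataclass
class StandingAverageQuery:
    """Streaming style (hypothetical): the query is registered once and its
    result is updated incrementally every time a new reading arrives."""
    count: int = 0
    total: float = 0.0

    def on_event(self, value: float) -> float:
        self.count += 1
        self.total += value
        return self.total / self.count  # current answer, no rescan of history


def run_stream(values: Iterable[float]) -> List[float]:
    # Feed readings one at a time and collect the answer after each one.
    query = StandingAverageQuery()
    return [query.on_event(v) for v in values]


readings = [20.0, 22.0, 27.0]
print(run_stream(readings))     # [20.0, 21.0, 23.0]
print(batch_average(readings))  # 23.0
```

Both end at the same value, but the standing query has an up-to-date answer after every reading instead of only after the batch job runs.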

Characteristics of Real-time Streaming Analytics

- Analysis happens in real time, as soon as data arrives or as soon as the already-conditioned dataset changes. The data processing can be of two types:

  - Routine operations, such as pre-processing followed by monitoring, reporting, statistics, and alerts

  - Decision-based processing, with instantaneous scores generated by predictive analytics models

- Immediate analysis and execution of prescriptive actions, which calls for learning algorithms and prescriptive analytics.

- Multi-channel data pipelining that captures all incoming data, events, transactions, data outputs, and machine-to-machine communications, enabling efficient inspection, analysis, storage, and purging of raw data.

- Event storage in parallel with real-time analysis.
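The characteristics above can be illustrated with a small per-event processor, sketched here in Python under loudly stated assumptions: the `StreamProcessor` class, the Fahrenheit-to-Celsius pre-processing step, the 80 °C alert threshold, and the logistic "risk" weights are all hypothetical placeholders, not a trained model or any platform's API. Each event is stored raw, pre-processed, summarized with a rolling statistic, checked against an alert rule (routine processing), and scored by a model (decision-based processing).

```python
import math
from collections import deque
from typing import Deque, Dict, List


class StreamProcessor:
    """Hypothetical per-event processor mirroring the characteristics above."""

    def __init__(self, window: int = 5, alert_threshold_c: float = 80.0) -> None:
        self.window: Deque[float] = deque(maxlen=window)  # rolling window for statistics
        self.alert_threshold_c = alert_threshold_c
        self.event_store: List[Dict] = []  # raw events kept alongside the analysis
        self.alerts: List[str] = []

    @staticmethod
    def preprocess(event: Dict) -> float:
        # Routine pre-processing: convert a Fahrenheit reading to Celsius.
        return (event["temp_f"] - 32.0) * 5.0 / 9.0

    @staticmethod
    def score(temp_c: float) -> float:
        # Decision-based processing: a toy logistic "risk" model.
        # The weights 0.3 and -20.0 are placeholders, not a trained model.
        return 1.0 / (1.0 + math.exp(-(0.3 * temp_c - 20.0)))

    def on_event(self, event: Dict) -> Dict:
        # Event storage: keep the raw event (sequentially here; a real
        # platform would write it concurrently with the analysis).
        self.event_store.append(event)
        temp_c = self.preprocess(event)
        self.window.append(temp_c)
        rolling_mean = sum(self.window) / len(self.window)  # monitoring statistic
        if temp_c > self.alert_threshold_c:  # routine alert rule
            self.alerts.append(f"{event['device']}: {temp_c:.1f} C")
        return {"device": event["device"], "rolling_mean_c": rolling_mean,
                "risk": self.score(temp_c)}


proc = StreamProcessor()
for e in [{"device": "pump-1", "temp_f": 50.0}, {"device": "pump-1", "temp_f": 212.0}]:
    print(proc.on_event(e))
print(proc.alerts)  # ['pump-1: 100.0 C']
```

The bounded `deque` keeps memory constant no matter how long the stream runs, which is one simple way to honor the "purge of raw data" idea for the statistics path.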

Business Value

Real-time streaming analysis gives business decisions greater certainty, with confidence that subsequent actions are rooted in a relevant, timely understanding of unfolding events. With data waiting time effectively reduced to zero, and nothing lost, overlooked, or outdated, the velocity and volume of data cease to be an obstacle. The results of the analytics are translated and fed back into the local systems in real time, so the lag between incoming data and the resulting outgoing data is extremely low.

Real-time streaming analytics also helps businesses by:

- Providing real-time visualizations of the business: Streaming analytics platforms come with dashboards that not only visualize the vast quantity of data from multiple sources but also give a clear view of how the scenario changes in real time.

- Immediate automation: Streaming analytics platforms stay idle until they detect an immediate risk, changed data, or an opportunity. Developers can design applications around event-based alerts delivered through email, push notifications, message queues, or service calls.

- Detecting urgent situations: Application developers and business analysts can use the tools provided by streaming platforms to define analytical patterns of real-time business events.

- Cutting preventable losses: Real-time streaming analytics helps to avoid preventable losses through early detection of at-risk situations.
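As a rough illustration of defining an analytical pattern and automating the response, here is a Python sketch. The pattern ("N consecutive readings above a limit from the same device"), the `PatternAlerter` class, and the two channels are hypothetical: an in-process `queue.Queue` stands in for a message queue and a plain list stands in for an email outbox, so no real notification service is called.

```python
import queue
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Alert = Dict[str, object]


class PatternAlerter:
    """Takes no action until a hypothetical pattern matches, then fans the
    alert out to every configured channel.

    Pattern: `consecutive` readings above `limit` from one device.
    """

    def __init__(self, limit: float, consecutive: int,
                 channels: List[Callable[[Alert], None]]) -> None:
        self.limit = limit
        self.consecutive = consecutive
        self.channels = channels
        self.streak: DefaultDict[str, int] = defaultdict(int)

    def on_event(self, device: str, value: float) -> None:
        if value > self.limit:
            self.streak[device] += 1
        else:
            self.streak[device] = 0  # pattern broken, start over
        # Fire exactly once per streak, when it first reaches the required length.
        if self.streak[device] == self.consecutive:
            alert = {"device": device,
                     "pattern": f"{self.consecutive} readings > {self.limit}"}
            for send in self.channels:
                send(alert)


# Stand-ins for real channels: an in-process message queue and an "email" outbox.
mq: "queue.Queue[Alert]" = queue.Queue()
outbox: List[Alert] = []

alerter = PatternAlerter(limit=90.0, consecutive=3, channels=[mq.put, outbox.append])
for v in [91.0, 95.0, 70.0, 92.0, 93.0, 97.0, 99.0]:
    alerter.on_event("boiler-7", v)

print(mq.qsize(), len(outbox))  # 1 1
```

Firing only when the streak first reaches the required length avoids flooding the channels with a repeat alert for every further reading in the same streak.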

Value Addition by Real-time Analytics

Forrester defines a streaming analytics platform as “software that can filter, aggregate, enrich, and analyze a high throughput of data from multiple disparate live data sources and in any data format to identify simple and complex patterns to visualize business in real-time, detect urgent situations, and automate immediate actions.” Real-time streaming analytics should also be sensitive to business concerns such as cost, time to market (TTM), and resource demand. At the highest level, it is an “always on” infrastructure that accurately senses all business-critical data, events, and transactions, analyzes them, and links them to appropriate, immediate actions.
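The filter, enrich, and aggregate steps in that definition can be sketched in a few lines of Python. Everything here is an assumption for illustration: two sources arrive in different formats (JSON lines and CSV), each is assumed to be ordered by timestamp so `heapq.merge` can interleave them, `DEVICE_SITE` is a made-up reference table, and results are averaged per site in 60-second tumbling windows. For brevity the sketch returns all windows at the end of a finite input, whereas a streaming platform would emit each window as it closes.

```python
import csv
import heapq
import io
import json
from collections import defaultdict
from typing import Dict, Iterator, List, Tuple

Reading = Tuple[int, str, float]  # (timestamp_s, device_id, value)

# Hypothetical reference data used for enrichment.
DEVICE_SITE = {"s1": "plant-A", "s2": "plant-A", "s3": "plant-B"}


def from_json(lines: List[str]) -> Iterator[Reading]:
    # Source 1: one JSON object per line.
    for line in lines:
        rec = json.loads(line)
        yield rec["ts"], rec["id"], float(rec["val"])


def from_csv(text: str) -> Iterator[Reading]:
    # Source 2: CSV rows of ts,device,value.
    for ts, dev, val in csv.reader(io.StringIO(text)):
        yield int(ts), dev, float(val)


def pipeline(sources: List[Iterator[Reading]],
             window_s: int = 60) -> Dict[Tuple[int, str], float]:
    # Merge time-ordered sources into one stream (assumes each source is sorted by ts).
    merged = heapq.merge(*sources, key=lambda r: r[0])
    sums: Dict[Tuple[int, str], float] = defaultdict(float)
    counts: Dict[Tuple[int, str], int] = defaultdict(int)
    for ts, dev, val in merged:
        if val < 0:  # filter: drop obviously invalid readings
            continue
        site = DEVICE_SITE.get(dev, "unknown")  # enrich: attach site from reference data
        key = (ts - ts % window_s, site)        # aggregate: tumbling window per site
        sums[key] += val
        counts[key] += 1
    return {k: sums[k] / counts[k] for k in sums}


json_src = from_json(['{"ts": 5, "id": "s1", "val": 10}',
                      '{"ts": 70, "id": "s3", "val": 4}'])
csv_src = from_csv("12,s2,20\n30,s1,-1\n")
print(pipeline([json_src, csv_src]))  # {(0, 'plant-A'): 15.0, (60, 'plant-B'): 4.0}
```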

Real-Time Analytics

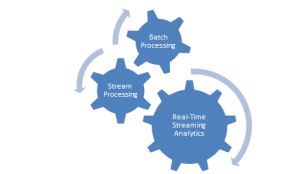

In execution, this loop of sense-analyze-act can be achieved through a design built around three core components:

- Stream processing, which ingests multiple high-volume data streams at high speed and routes the received data in parallel to real-time analytics and batch processing

- Real-time analytics, which runs diagnostic & predictive analytics models, and triggers appropriate and immediate prescriptive actions

- Batch processing, which stores and processes data-feeds offline and could build or update the predictive models used for real-time scoring
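The three components can be wired together in a toy Python sketch of the sense-analyze-act loop. The class names, the "model" (an anomaly threshold of mean plus three standard deviations of the stored history), the retraining interval, and the "shut valve" action are all hypothetical. The stream layer routes each event to both paths sequentially here, whereas a real deployment would run them concurrently. The batch layer periodically rebuilds the threshold that the real-time layer uses for scoring.

```python
import statistics
from typing import List, Optional


class BatchLayer:
    """Stores the data feed offline and rebuilds the model used for scoring."""

    def __init__(self) -> None:
        self.archive: List[float] = []

    def store(self, value: float) -> None:
        self.archive.append(value)

    def build_model(self) -> Optional[float]:
        # Hypothetical "model": anomaly threshold = mean + 3 * stdev of history.
        if len(self.archive) < 2:
            return None
        return statistics.mean(self.archive) + 3 * statistics.stdev(self.archive)


class RealTimeLayer:
    """Scores each event with the latest model and triggers an action."""

    def __init__(self) -> None:
        self.threshold: Optional[float] = None
        self.actions: List[str] = []

    def score(self, value: float) -> None:
        if self.threshold is not None and value > self.threshold:
            self.actions.append(f"shut valve: reading {value} > {self.threshold:.2f}")


class StreamLayer:
    """Ingests events and routes each one to both the real-time and batch paths."""

    def __init__(self, realtime: RealTimeLayer, batch: BatchLayer,
                 retrain_every: int = 5) -> None:
        self.realtime, self.batch, self.retrain_every = realtime, batch, retrain_every
        self.seen = 0

    def ingest(self, value: float) -> None:
        self.realtime.score(value)  # sense -> analyze -> act, per event
        self.batch.store(value)     # the same event also lands in offline storage
        self.seen += 1
        if self.seen % self.retrain_every == 0:
            self.realtime.threshold = self.batch.build_model()  # refresh scoring model


rt, bl = RealTimeLayer(), BatchLayer()
stream = StreamLayer(rt, bl)
for v in [10.0, 11.0, 9.0, 10.0, 10.0, 50.0]:
    stream.ingest(v)
print(rt.actions)  # ['shut valve: reading 50.0 > 12.12']
```

Keeping model building in the batch layer means the real-time path only does a cheap comparison per event, while the expensive work runs offline on the accumulated history.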