Industry 4.0 enabled by Data Sciences

3AI January 13, 2021

“The factory environment is a data scientist’s paradise: both highly multivariate and relatively quantifiable.” – Travis Korte

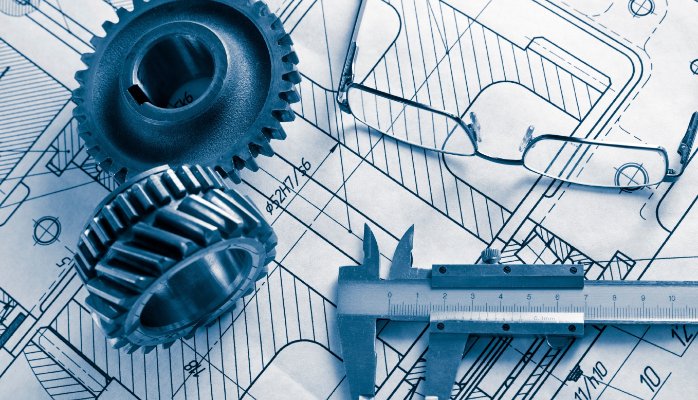

In the past 20 years or so, manufacturers have been able to reduce waste and variability in their production processes and dramatically improve product quality and yield (the amount of output per unit of input) by implementing lean and Six Sigma programs. However, in certain processing environments—pharmaceuticals, chemicals, and mining, for instance—extreme swings in variability are a fact of life, sometimes even after lean techniques have been applied. Given the sheer number and complexity of production activities that influence yield in these and other industries, manufacturers need a more granular approach to diagnosing and correcting process flaws. Advanced Data Science provides just such an approach.

Companies like Ford and GM are integrating huge quantities of data – from internal and external sources, from sensors and processors – to reduce energy costs, improve production times and boost profits.

Even smaller businesses are seeing the benefits:

- Big data is cheaper and cheaper to store

- Analytics software is increasingly sophisticated and widespread

- Manufacturers have access to parallel processing machines

In an environment with no room for error, each turn of the screw counts.

Raytheon learned this when they implemented MES (manufacturing execution systems), a software solution that collects and analyzes factory-floor data.

By studying its data, Raytheon was able to determine that a screw in one of the components must be turned thirteen times. If it is turned only twelve times, an error message flashes and installation shuts down.

Like every other manufacturer, Raytheon is benefiting from the fact that complex robotics and automation have replaced humans on the factory floor. Machines embedded with sensors are constantly conveying high-quality data.

When properly parsed by data scientists, this information can be used to:

- Predictively model equipment failure rates

- Streamline inventory management

- Target energy-inefficient components

- Optimize factory floor space

What’s changed over the last 5 years is the availability of data. Sensors are automating the collection of data and providing data about machines that was previously unavailable. As more machines are connected to a network or the internet, companies now have the ability to easily access this data. This is the Industrial Internet of Things (IIoT). GE and Cisco are massive believers in sensors and connectivity driving improvements in productivity for manufacturing.

Most clients stop at this point and say, “I’ve had sensors and control centers for as long as I’ve been here. How is this anything different?” Here’s what’s changed:

- The number of sensors per machine has increased, which has automated a lot of manual data gathering and provides previously unavailable data.

- These larger datasets are easier to get from the machine to a central database because the machine is connected to the network.

- These larger datasets are being aggregated by the companies that make the machines, allowing for anonymous cross-company sharing of machine performance data.

- High-performance computing platforms like Spark and cloud services like Amazon Web Services or Microsoft Azure allow businesses of any size to store, mine and analyze these large datasets.

Prescriptive Data Sciences In Manufacturing

How does that move manufacturing closer to the goal of zero unplanned downtime? The problem with older models is that they had significant blind spots. The available data didn’t provide a complete picture of the machine in action, and that led to frequent failures in the model. The IIoT is aimed at providing a more complete picture of the machine in a variety of environments to eliminate these blind spots. Predictive models rely heavily on complete, high-quality data for accuracy. Older models would predict a machine failure based on an average and could be months off. Newer models based on IIoT data can be accurate to the week or even the day.
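To make the contrast concrete, here is a minimal Python sketch of the two styles of prediction. It is only an illustration: the function names, the vibration readings, the failure threshold and the fleet lifetimes are all made up for this example, and a straight-line trend is an assumed stand-in for whatever model a real system would use.

```python
from statistics import mean

def fleet_average_estimate(lifetimes_days):
    """Older style: assume every machine fails at the fleet's mean lifetime."""
    return mean(lifetimes_days)

def trend_estimate(days, readings, failure_threshold):
    """Sensor-based style: fit a least-squares line to one machine's readings
    and extrapolate to the day the reading would reach failure_threshold.
    Returns None when the readings show no upward trend."""
    d_bar, r_bar = mean(days), mean(readings)
    covariance = sum((d - d_bar) * (r - r_bar) for d, r in zip(days, readings))
    variance = sum((d - d_bar) ** 2 for d in days)
    slope = covariance / variance
    if slope <= 0:
        return None
    intercept = r_bar - slope * d_bar
    return (failure_threshold - intercept) / slope

# Hypothetical data: lifetimes of four past machines, and one machine's
# vibration readings sampled every ten days.
historical_lifetimes = [300, 420, 510, 370]
days = [0, 10, 20, 30, 40]
vibration = [1.0, 1.5, 2.0, 2.5, 3.0]

average_guess = fleet_average_estimate(historical_lifetimes)
sensor_guess = trend_estimate(days, vibration, failure_threshold=10.0)
print(f"fleet average says day {average_guess}, this machine's trend says day {sensor_guess:.0f}")
```

In this toy data the fleet average and the machine's own trend disagree by months, which is the gap the paragraph above describes.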

Event Driven Data Sciences

There are two ways to make that happen. The first is event detection. When we mine data from sensors within an aircraft engine, for example, we start to get an idea of how the components interact with each other over time. Add data from several engines together and the number of known interactions gets large enough for us to start detecting patterns. When a known pattern that leads to an event we’re interested in shows up, it triggers an alert that allows a person to review the data and determine a course of action.
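As a rough illustration of event detection, the Python sketch below turns raw readings into symbolic events and raises an alert when a known sequence of events appears in order. Everything specific here is an assumption for the example: the sensor names, the limits, and the "bearing_wear" pattern are hypothetical, and real systems would learn such patterns from data rather than hard-code them.

```python
# Hypothetical pattern library: an ordered series of events that is assumed
# to precede a failure we care about.
KNOWN_PATTERNS = {
    "bearing_wear": ("temp_high", "vibration_high", "pressure_low"),
}

def to_events(reading, limits):
    """Turn one raw reading into symbolic events using per-sensor (low, high) limits."""
    events = set()
    for sensor, (low, high) in limits.items():
        value = reading[sensor]
        if value > high:
            events.add(f"{sensor}_high")
        elif value < low:
            events.add(f"{sensor}_low")
    return events

def detect(readings, limits, patterns=KNOWN_PATTERNS):
    """Return (time_index, pattern_name) alerts for every pattern whose
    events occur in order across the stream of readings."""
    progress = {name: 0 for name in patterns}  # next step to match, per pattern
    alerts = []
    for t, reading in enumerate(readings):
        events = to_events(reading, limits)
        for name, steps in patterns.items():
            if steps[progress[name]] in events:
                progress[name] += 1
                if progress[name] == len(steps):
                    alerts.append((t, name))  # hand off to a person for review
                    progress[name] = 0
    return alerts

limits = {"temp": (20, 90), "vibration": (0, 5), "pressure": (30, 60)}
readings = [
    {"temp": 70, "vibration": 2, "pressure": 45},
    {"temp": 95, "vibration": 2, "pressure": 45},
    {"temp": 96, "vibration": 6, "pressure": 44},
    {"temp": 97, "vibration": 7, "pressure": 25},
]
alerts = detect(readings, limits)
print(alerts)
```

The alert carries only the time index and the pattern name; deciding what to do about it is left to the person reviewing the data.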

What about infrequent events? The less frequently an interaction happens, the fewer times we see it in the data and the less we understand it. Here’s where the concept of blind spots in predictive modeling comes from. Interactions that occur frequently in the available data have well-mapped outcomes. We don’t understand all the outcomes from less frequent and unobserved interactions, which leads to uncertainty in the predictive model.

There are two ways to overcome this. The first is to gather a large enough dataset that even infrequent interactions happen enough to be measured. The second is experimentation where the infrequent events are intentionally recreated and measured.
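Before choosing between those two options, it helps to know where the blind spots are. One simple way, sketched below in Python, is to count how often each combination of operating conditions appears in the data and flag the combinations seen too rarely or never. The condition names, the sample counts, and the `min_count` cutoff are assumptions made up for this example.

```python
from collections import Counter
from itertools import product

def blind_spots(observations, levels, min_count=30):
    """Return {combination: times_seen} for every possible combination of
    condition levels that appears fewer than min_count times, including
    combinations that were never observed at all."""
    counts = Counter(observations)
    return {
        combo: counts[combo]
        for combo in product(*levels)
        if counts[combo] < min_count
    }

# Hypothetical operating conditions: (load, ambient temperature).
levels = (("low", "high"), ("cold", "hot"))
observations = (
    [("low", "cold")] * 50
    + [("high", "cold")] * 40
    + [("low", "hot")] * 3
)

gaps = blind_spots(observations, levels)
print(gaps)
```

Combinations with a small count are candidates for gathering more data; combinations with a count of zero may only be reachable through deliberate experiments.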

Internet of Things

And that’s just the internal data. Imagine, if you will, a world where machines bypass humans and speak directly to each other.

- Heating systems consult with weather channels, cellphones and cars to determine when they should fire up the furnace.

- Tractors use data from satellites and ground sensors to decide how much fertilizer to spread on a certain field.

RFID readers, tags and sensors have become an integral part of manufactured objects, able to relay data to each other at the drop of a hat. GE is particularly interested in the possibilities. In 2013, it announced that it was more than doubling the vertically-specialized hardware/software packages it offers to connect machines and interpret their data.

The goal is to reduce the amount of unplanned downtime for industrial equipment (e.g., wind turbines) and avoid potential problems (e.g., power grid outages). The trick is going to be ensuring that all of these objects are speaking the same language.

Smart Manufacturing and NNMI

Having suffered right along with manufacturers during the Great Recession, the government is doing its best to help. In 2012, the Obama administration proposed a National Network for Manufacturing Innovation (NNMI), modeled after the Fraunhofer Institutes in Germany.

The proposal calls for a series of public/private partnerships between U.S. industry, universities and federal government agencies. These partnerships would be focused on developing and commercializing new manufacturing technologies.

NNMI’s 2012 pilot institute was the National Additive Manufacturing Innovation Institute (NAMII), led by the National Center for Defense Manufacturing and Machining. In 2013, the administration announced the establishment of three new Institutes for Manufacturing Innovation (IMIs), each with a separate focus:

- Digital manufacturing

- Composite materials

- Next-generation energy sources

Machine Learning for the Systems Approach To Predictive Analytics

The second approach to predictive analytics is the systems approach. Rather than detecting the patterns that lead to events, the systems approach detects the causes of events. In our aircraft engine example, the systems approach would look at the root causes of failure rather than the events which led to the failure. Once the model has been taught what causes failure, it can detect patterns that lead to failure even if it has never seen them before.

This is where machine learning methods become useful for predictive analytics. People can look at a situation they’ve never been in before and make some educated guesses about how to react. An engine mechanic can see a wear pattern on a part for the first time and make an educated guess that it indicates a problem based on their experience. Machine learning teaches computers to do the same thing in very narrow sets of situations. For that reason you’ll often hear it called narrow artificial intelligence. We use machine learning to give a predictive model a set of heuristics. It uses those heuristics to choose an action even when faced with event series it has never encountered before.
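A small Python sketch can show what "choosing an action for a case it has never seen" looks like in practice. The article does not name a specific method, so this uses a nearest-centroid classifier, one of the simplest options, purely as a stand-in. The feature names (normalized wear depth and temperature), the labels and the training values are all hypothetical.

```python
from math import dist
from statistics import mean

def fit_centroids(samples):
    """samples: list of (features, label) pairs.
    Returns {label: centroid}, where each centroid is the per-feature
    average of the examples carrying that label."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }

def predict(centroids, features):
    """Label a reading, seen before or not, by its closest centroid."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

# Hypothetical training data: (normalized wear depth, normalized temperature).
training = [
    ((0.1, 0.2), "healthy"),
    ((0.2, 0.1), "healthy"),
    ((0.8, 0.9), "failing"),
    ((0.9, 0.7), "failing"),
]
centroids = fit_centroids(training)

# Neither reading below appears in the training data.
print(predict(centroids, (0.7, 0.95)))
print(predict(centroids, (0.3, 0.05)))
```

The learned centroids play the role of the heuristics: they summarize past experience well enough to make an educated guess about a new reading, much as the mechanic does with an unfamiliar wear pattern.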